🧠 Context: Why This Matters Now

We're at a critical inflection point in artificial intelligence development. According to Morgan Stanley's latest report, we could see a major AI breakthrough in the first half of 2026—but this advancement comes with a staggering infrastructure cost that could strain power grids to their breaking point. Meanwhile, Apple just announced the M5 MacBook Air with expanded AI capabilities, bringing powerful AI tools directly to consumers' laps. These two developments represent the dual nature of our AI future: unprecedented capability paired with unprecedented energy demands.

The timing couldn't be more significant. U.S. residential electricity prices have risen by more than 36% since 2020, from 12.76 cents per kilowatt-hour in 2020 to 17.44 cents in February 2026, and are projected to reach 19.01 cents by September 2027. As AI companies dramatically increase computing power, we're facing a fundamental question: Can our energy infrastructure support the AI revolution, or will power constraints become the new bottleneck?
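As a quick sanity check on the price figures above, the snippet below recomputes the percentage changes from the quoted cents-per-kWh values. It is a back-of-envelope sketch: the constant names and the `pct_change` helper are illustrative, and the 2027 value is a projection rather than observed data.

```python
# Back-of-envelope check of the residential electricity price figures.
# Prices are U.S. cents per kilowatt-hour, as quoted in the text.
PRICE_2020 = 12.76
PRICE_FEB_2026 = 17.44
PRICE_SEP_2027_PROJECTED = 19.01  # a projection, not an observed price


def pct_change(old: float, new: float) -> float:
    """Return the percentage change from `old` to `new`."""
    return (new - old) / old * 100


if __name__ == "__main__":
    print(f"2020 -> Feb 2026: {pct_change(PRICE_2020, PRICE_FEB_2026):.1f}%")
    print(f"Feb 2026 -> Sep 2027 (projected): "
          f"{pct_change(PRICE_FEB_2026, PRICE_SEP_2027_PROJECTED):.1f}%")
    print(f"2020 -> Sep 2027 (projected): "
          f"{pct_change(PRICE_2020, PRICE_SEP_2027_PROJECTED):.1f}%")
```

Run as a script, it prints about 36.7% for 2020 to February 2026, consistent with the "more than 36%" figure, then roughly 9.0% more by September 2027 and about 49.0% cumulatively if the projection holds.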

🧠 Deep Dive: 5 Key Insights with Evidence

1. The Scaling Law Still Holds—And That's the Problem

Morgan Stanley's analysis indicates that AI's "scaling laws" still hold: more computing power yields more capable models. Elon Musk recently argued that applying ten times more compute to training large language models could effectively double their intelligence. The bank agrees: future models could improve rapidly if computing resources keep expanding.

Evidence: OpenAI's GPT-5.4 'Thinking' model reportedly scored 83% on the GDPVal benchmark, which evaluates AI performance on economically valuable tasks by comparing model output with work produced by industry professionals. This suggests AI systems are beginning to approach expert-level performance in certain domains.
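Musk's ten-times-compute claim can be made concrete if we assume, purely for illustration, that a capability score I grows as a power law in training compute C. The power-law form and the symbols I, C, k, and alpha are assumptions for this sketch, not something Morgan Stanley or Musk specified:

```latex
I(C) = k\,C^{\alpha}, \qquad
\frac{I(10C)}{I(C)} = 10^{\alpha} = 2
\;\Longrightarrow\;
\alpha = \log_{10} 2 \approx 0.301
```

Under that assumption, each further doubling of capability costs another tenfold increase in compute (100x compute for 4x, 1,000x for 8x), while merely doubling compute yields only about 2^0.301 ≈ 1.23x. That steep compute bill is what ties the scaling argument so closely to the energy question.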

2. The 2026 Breakthrough Timeline Isn't Science Fiction

Investment bank Morgan Stanley has warned that a major breakthrough in artificial intelligence could arrive in the first half of 2026. This prediction isn't based on wishful thinking but on observable trends in computing power expansion at leading AI labs.

Evidence: Executives from major AI companies are reportedly signaling to investors that upcoming model releases may deliver larger performance gains than currently expected. Rapid compute growth could advance AI faster than many policymakers, industries, and infrastructure providers are prepared for.

3. Self-Improving AI Systems Could Emerge by 2027

Jimmy Ba, co-founder of Elon Musk's AI company xAI and a professor at the University of Toronto, suggests that recursive self-improving AI systems could begin appearing as early as the first half of 2027 if current development trends continue.

Evidence: The concept of recursive self-improvement—where AI systems help design and improve future versions of themselves—could accelerate technological progress significantly because systems would no longer rely entirely on human researchers to upgrade their capabilities.

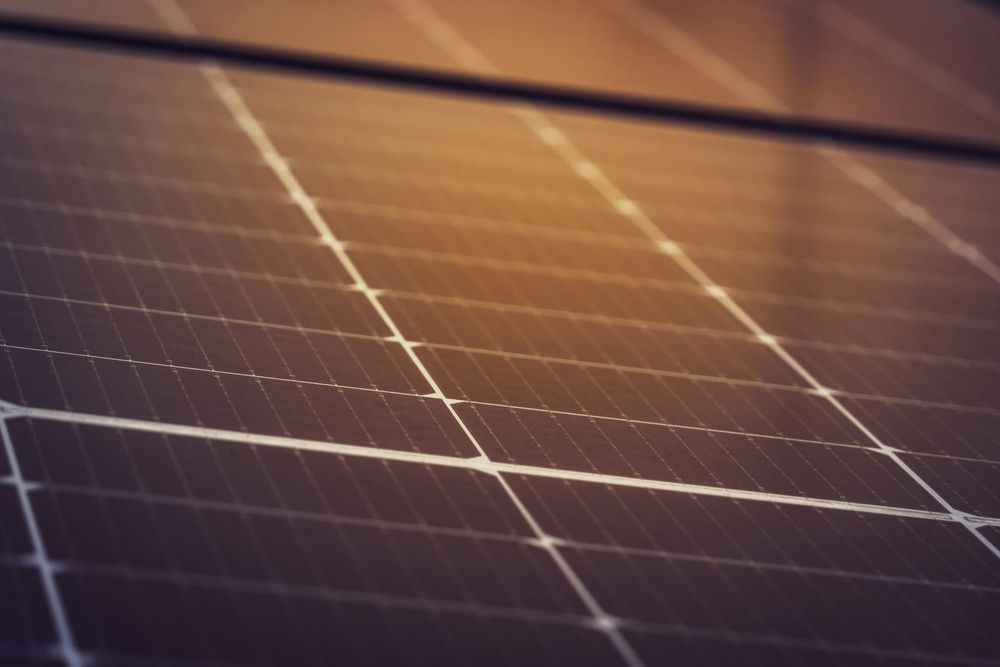

4. The Energy Math Doesn't Add Up

The United States could face a power shortfall of between 9 and 18 gigawatts by 2028, equivalent to roughly 12 to 25% of the electricity required to support projected AI data center demand. Power consumption from U.S. data centers could triple by 2028 as AI infrastructure expands.

Evidence: Data centers that depend solely on the existing power grid increasingly cannot secure the multi-gigawatt supply that AI workloads require. Some companies are repurposing former Bitcoin mining facilities, which already have large power connections, into high-performance computing sites for AI workloads. Others are installing natural gas turbines or fuel cell systems directly at data center locations to generate electricity locally.
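The two ranges in the 2028 shortfall estimate also imply a rough size for projected AI data center demand. The sketch below divides each shortfall by its share of demand; pairing the low ends (9 GW, 12%) and the high ends (18 GW, 25%) is an assumption about how the quoted figures line up, and `implied_total_gw` is a hypothetical helper.

```python
# Rough implied AI data center demand behind the 2028 shortfall estimate.
# Assumption: the low and high ends of the two quoted ranges correspond.
SHORTFALL_GW = (9, 18)          # projected U.S. shortfall, gigawatts
SHARE_OF_DEMAND = (0.12, 0.25)  # shortfall as a fraction of required power


def implied_total_gw(shortfall_gw: float, share: float) -> float:
    """Total demand implied if `shortfall_gw` is `share` of it."""
    return shortfall_gw / share


if __name__ == "__main__":
    for gw, share in zip(SHORTFALL_GW, SHARE_OF_DEMAND):
        total = implied_total_gw(gw, share)
        print(f"{gw} GW at {share:.0%} of demand -> ~{total:.0f} GW total")
```

Both ends point to roughly 72 to 75 GW of projected AI data center demand, so even the low-end shortfall is a sizable slice of a very large number.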

5. The "Shadow Grid" Is Already Forming

Major technology firms are making substantial investments in nuclear power to secure reliable, carbon-free electricity and circumvent limitations of the existing power grid. Data centers are increasingly investing in "off-the-meter" development, reducing strain on the overall grid by bringing their own generation sources like on-site solar.

Evidence: The average wait for a grid connection in primary data center markets is already four to six years, and up to 10 years in cities like Tokyo. These delays have helped create an emerging investment model around AI infrastructure that Morgan Stanley calls the '15-15-15' dynamic: data center leases lasting around 15 years, delivering roughly 15% yields, and generating about $15 per watt in estimated net value creation.
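To put the "$15 per watt" figure in perspective, the sketch below scales it to a few campus sizes. The 100 MW, 500 MW, and 1,000 MW capacities are hypothetical examples, not figures from Morgan Stanley, and the calculation simply treats the per-watt value as a flat multiplier.

```python
# Scale the '15-15-15' net-value figure to hypothetical campus sizes.
NET_VALUE_PER_WATT_USD = 15  # "about $15 per watt", as quoted in the text
WATTS_PER_MW = 1_000_000


def implied_net_value_usd(capacity_mw: int) -> int:
    """Estimated net value creation for a campus of `capacity_mw` megawatts."""
    return capacity_mw * WATTS_PER_MW * NET_VALUE_PER_WATT_USD


if __name__ == "__main__":
    for mw in (100, 500, 1_000):  # hypothetical capacities, not from the source
        print(f"{mw:,} MW -> ~${implied_net_value_usd(mw) / 1e9:.1f} billion")
```

At that rate, a single 1 GW campus corresponds to roughly $15 billion in estimated net value creation.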

📊 Data & Trends: The Numbers That Tell the Story

- 36% increase in U.S. residential electricity prices since 2020 (12.76¢ to 17.44¢ per kWh)

- 9-18 gigawatts projected U.S. power shortfall by 2028

- 4-6 year wait for grid connections in primary data center markets

- 83% score achieved by GPT-5.4 on GDPVal benchmark

- 15-15-15 dynamic emerging in AI infrastructure investment

- 6.9x faster AI video enhancement performance in Apple's new M5 MacBook Air compared to M1

⚠️ Different Perspectives: The Controversy and Debate

The Backlash Is Growing

From rural Virginia to the Arizona desert, communities that once welcomed tech investment are now pushing back against data centers amid growing concerns that these facilities strain local power grids and raise costs for everyone else. Advocacy groups and community members are protesting the laws that govern data center development, questioning whether households are subsidizing corporate AI ambitions.

Who's Really Footing the Bill?

A recent report from SemiAnalysis, a semiconductor research firm, argues that data center expansion is only part of the story: market design and policy decisions play a larger role in rising energy prices than AI infrastructure growth alone. The report contrasts PJM Interconnection (which serves all or parts of 13 states and the District of Columbia) with ERCOT in Texas, where prices have remained relatively stable despite data center development.

Corporate Promises vs. Reality

Large technology companies have tried to ease these concerns with pledges to cover the electricity costs of their projects. Microsoft outlined a five-point plan that includes covering additional electricity costs, and Anthropic followed with similar commitments. President Trump recently summoned AI executives to affirm the Ratepayer Protection Pledge. However, experts question whether these commitments will hold, given that hyperscalers' AI investments have struggled to turn a profit.

🧠 Strategic Implications: What This Means for Tech and Business

1. Energy Becomes the New Moore's Law

The primary constraint for AI growth has shifted from computing chips to the availability of reliable power. Companies that secure energy access will have a competitive advantage. We're seeing this play out with tech giants investing in nuclear power and building their own energy infrastructure.

2. The Rise of "AI-First" Hardware

Apple's M5 announcement shows hardware evolving to support AI workloads at the edge. With up to 6.9x faster AI video enhancement than the M1, consumer devices are becoming capable of handling AI tasks that previously required cloud infrastructure. This could help alleviate some data center demand.

3. Economic Disruption Accelerates

Morgan Stanley warns that increasingly capable AI systems may act as a 'deflationary force' in the economy by allowing companies to automate tasks previously performed by humans. OpenAI CEO Sam Altman argues that AI could enable extremely small teams to build highly competitive companies—startups with only one to five people may eventually compete with much larger organizations.

4. Regulatory Pressure Mounts

Public backlash could prompt regulators to impose new rules on hyperscalers. The current administration's skepticism toward renewable energy commitments raises questions about how far sustainability pledges will advance. Companies that fail to address energy concerns risk facing regulatory intervention.

🧠 Key Takeaways: The Bottom Line

- 2026 is the inflection point: A major AI breakthrough could arrive as early as the first half of 2026, and it would come with massive energy demands that could strain power grids.

- Energy is the new bottleneck: Computing power is no longer the primary constraint; reliable electricity access is becoming the critical factor in AI development.

- Infrastructure is evolving: The "shadow grid" of private energy generation is emerging as companies bypass traditional power grids.

- Economic disruption is accelerating: AI's deflationary effects could reshape labor markets and enable smaller teams to compete with large corporations.

- The backlash is real: Communities are pushing back against data centers, and companies must address energy concerns or face regulatory pressure.

The AI revolution isn't just about algorithms and models anymore—it's about megawatts and grid capacity. As we approach the predicted 2026 breakthrough, the companies that solve the energy equation will be the ones that shape our AI-powered future. The race isn't just for better AI; it's for the power to run it.