Model Comparison Series: GPT-5.2

OpenAI dropped GPT-5.2 in December 2025. The update focused on three main areas: reasoning, coding, and long-document handling. It also claimed roughly 30% lower hallucination rates compared to the previous generation. Let’s start with where it actually performs.

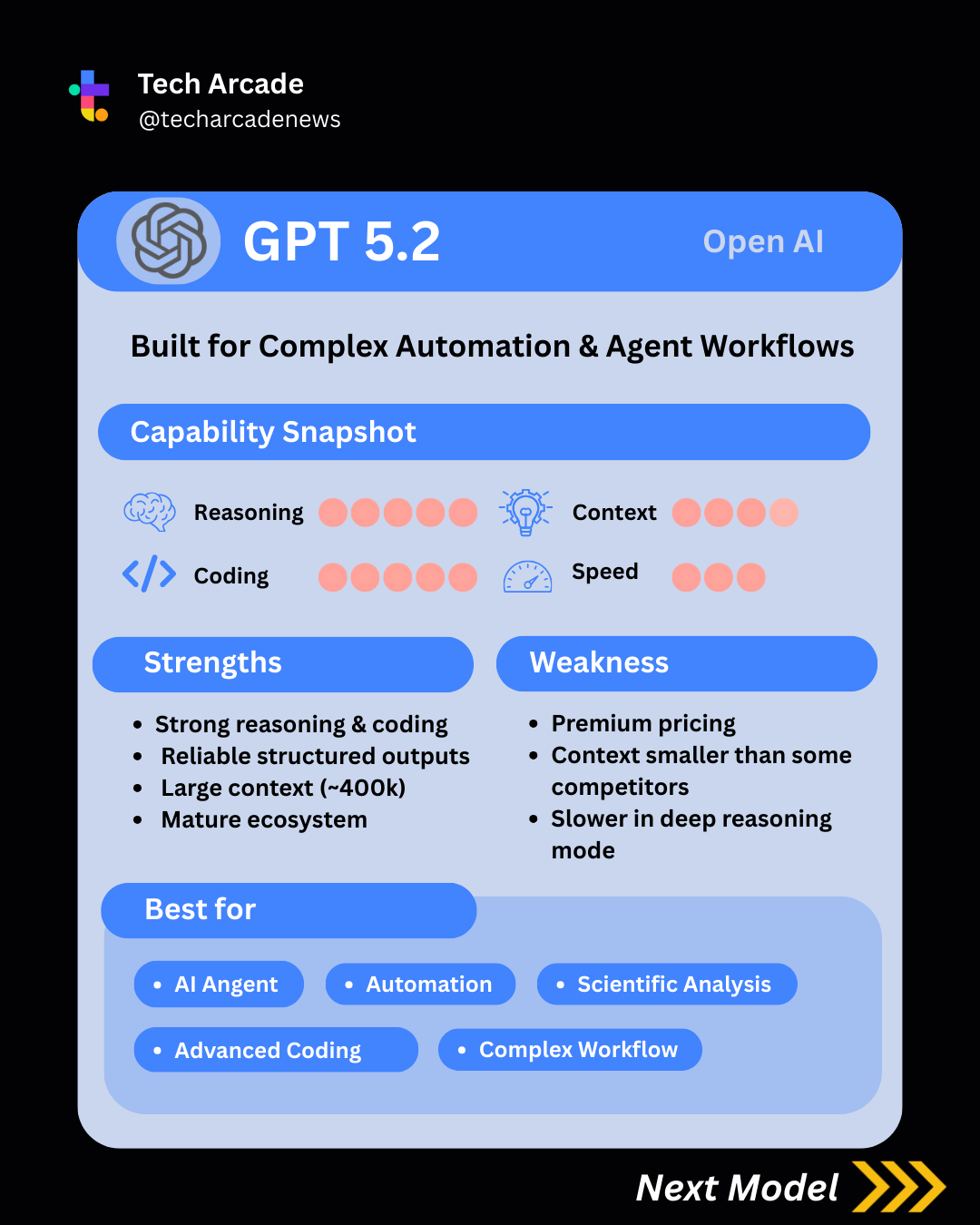

Strengths of GPT 5.2

- Strong reasoning & coding

We gave GPT-5.2 a 5/5 in both reasoning and coding. On benchmark trends, it continues to sit at or near the top tier. In reasoning-heavy evaluations like AIME-style math problems and broad knowledge tests like MMLU, it performs at frontier level. On coding benchmarks such as SWE-bench Verified, which tests real-world GitHub issue resolution rather than toy code snippets, it also remains highly competitive. More importantly, in structured prompt comparisons across multi-step reasoning and non-trivial coding tasks, GPT-5.2 tends to produce fewer logical gaps and fewer “almost correct” answers.

- Reliable Structured Output

Based on developer feedback and ecosystem adoption, GPT models are widely regarded as strong in:

- Tool calling consistency

- Valid JSON formatting

- Multi-step function chaining

- Very large context window (400k window)

GPT-5.2 supports extended context lengths of up to ~400k tokens (depending on variant). So it is specifically great for long document analysis, technical specifications, large codebase reasoning and extended research. Compared to earlier versions, GPT-5.2 improves long-context comprehension and introduces smarter context management.

To put the context size into perspective:

- Claude 4.5 is widely known for large context support, commonly cited around ~200k tokens in standard configurations.

- Gemini Ultra emphasizes long-context capability in enterprise tiers, with some configurations supporting very large input windows.

- Kimi K2.5 is positioned as a long-context specialist, particularly strong in large bilingual document handling.

- DeepSeek V2 and Grok 4.1 offer competitive context windows but are generally not positioned as the largest-context models in the market.

So while GPT-5.2 may not always advertise the absolute largest theoretical ceiling, ~400k tokens already exceeds what most enterprise workflows realistically require.

- Mature Ecosystem

Over the past few years, OpenAI’s models have basically become the default in a lot of AI stacks. Not just because they’re the smartest, but because they’re the most widely adopted. And GPT-5.2 benefits from that.

The ecosystem around it is huge. There are already tons of tutorials, integrations, agent frameworks, debugging threads, and production case studies. If something breaks, you’re rarely the first person to experience it.

That matters more than people think. It means better support for things like:

- Tool and function calling

- Structured JSON outputs

- Agent workflows

- RAG pipelines

- Enterprise deployments

You’re not experimenting alone. There’s infrastructure built around it.

So even if another model slightly edges it out on a specific benchmark, GPT-5.2 often feels safer to ship. The ecosystem reduces risk.

And in real production environments, reducing risk usually beats chasing marginal gains.

Limitations of GPT 5.2

Now let's talk about some limitations.

- Premium Pricing

As the latest flagship model, GPT-5.2 also sits at the higher end of the pricing spectrum.

GPT-5.2: $1.75 input / $14 output (per 1M tokens)

For comparison:

| Model | Input ($/1M) | Output ($/1M) |

|---|---|---|

| GPT-5.2 | 1.75 | 14 |

| GPT-5 / 5.1 | 1.25 | 10 |

| Gemini 3.1 Pro | 2.00 | 12 |

| Gemini 2.5 Pro | 1.25 | 10 |

| Kimi K2.5 | 0.60 | 3 |

| DeepSeek V3.2 | 0.28 | 0.42 |

| Grok-4.1 Fast Reasoning | 0.20 | 0.50 |

That means if your workload is output-heavy, GPT-5.2 becomes noticeably more expensive at scale. But note that per-token pricing is only part of the story. A model that produces more accurate outputs in fewer retries can still be cheaper in practice.

- Not the Absolute Context Leader

While ~400k tokens is large, it is not the highest theoretical ceiling in the market. Gemini 2.5 Pro and Gemini 2.5 Flash both have 1M tokens. In practice, 400k is more than enough for most workflows. But if your entire value proposition depends on ultra-long document ingestion, you may want to compare carefully.

- Slower in Deep Reasoning Mode

The heavier reasoning variants are powerful, but they are not lightweight.

When you enable deeper reasoning:

- Latency increases

- Token usage increases

- Costs increase

For deep research or complex coding tasks, that tradeoff may be worth it.

But for:

- Simple Q&A

- Fast conversational flows

- Lightweight UI assistants

It can feel slower than necessary.

Some teams actually prefer lighter models for front-end user interactions and reserve GPT-5.2 for backend intelligence.

Best for

- AI agent orchestration

- Multi-step automation

- Complex coding workflows

- Structured output pipelines

- Long research synthesis

Overall

GPT-5.2 is clearly one of the strongest general-purpose models in the market right now.

With top-tier reasoning performance and a large context window, it’s particularly well-suited for long-form, high-stakes document analysis, complex coding tasks, and multi-step automation workflows.

It feels built for depth and reliability rather than speed or charm.

That said, it comes with premium pricing, and its tone can feel slightly “cold” or clinical compared to some competitors. If you’re building conversational or personality-driven applications, that may matter.

In short: powerful, stable, and production-ready, but not cheap, and not always the most personable option.