Model Comparison Series: Claude 4.5

Anthropic released the Claude 4.5 model family between September and November 2025, starting with Claude Sonnet 4.5 on September 29, 2025. This family includes 3 main models:

- Claude Sonnet 4.5 (Sept 29, 2025): Announced as a top-tier model for coding and complex agent tasks.

- Claude Haiku 4.5 (Oct 15, 2025): Released as the fast, cost-efficient model in the family.

- Claude Opus 4.5 (Nov 24, 2025): The flagship model, noted for superior coding, computer use, and reasoning.

Compared to the previous 4.0 model, it has ~67% lower costs, 50-75% fewer tool-calling errors, and superior coding performance, including a record-breaking 80.9% on SWE-bench Verified for Opus 4.5.

While some models compete on speed or multimodal flashiness, Claude’s focus has been slightly different. It aims to be the model you trust when the task involves long documents, careful reasoning, or analytical writing.

In this article, let's dive in the pros and cons of using Claude 4.5.

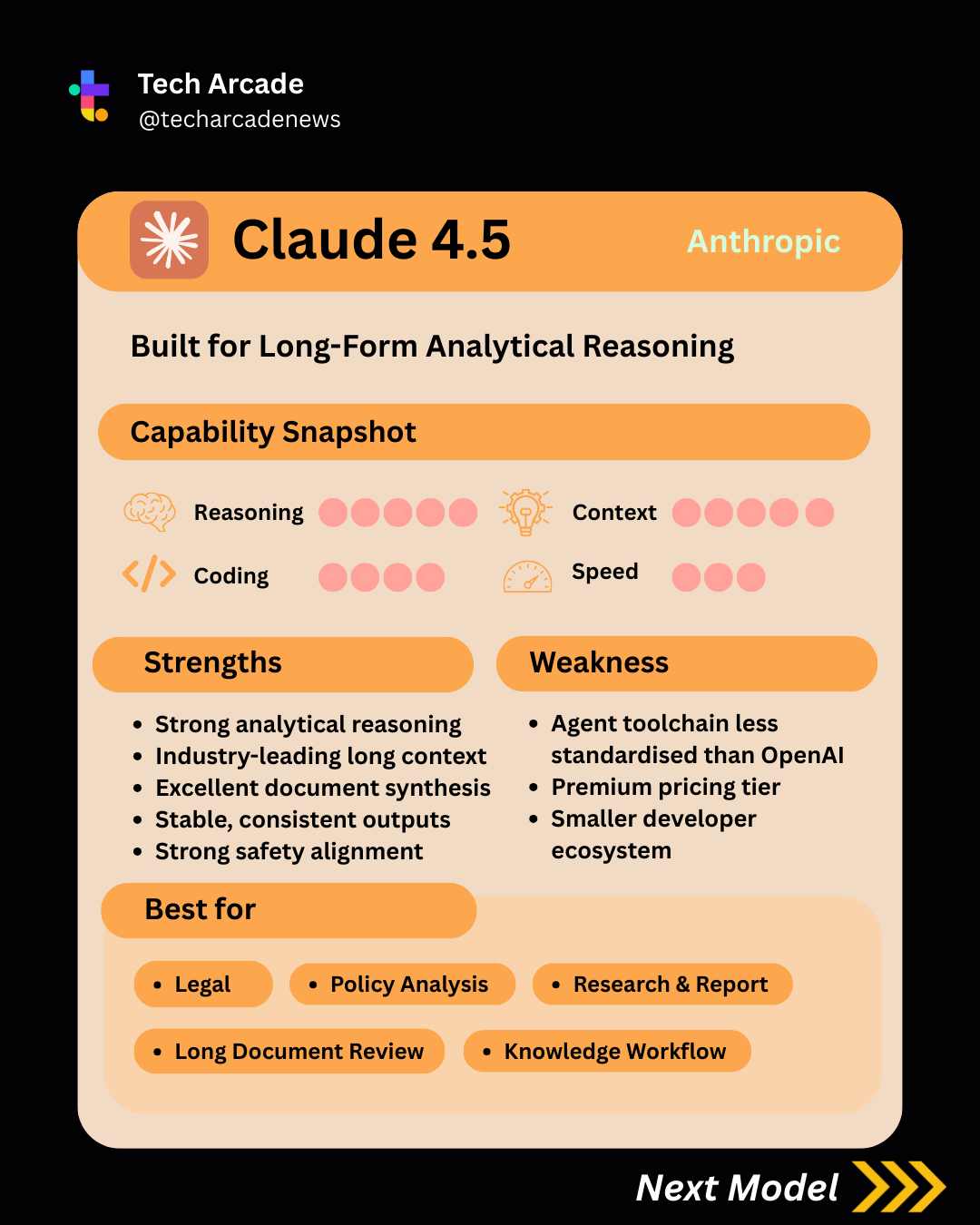

Strengths of Claude 4.5

- Strong analytical reasoning

Claude models have long been known for careful, step-by-step reasoning, and Claude 4.5 continues that trend.

Across benchmark trends such as MMLU-style knowledge tests and reasoning-heavy evaluations, Claude consistently performs at the frontier level. (You can check out this MMLU-Pro leadership board published by Hugging Face. It stands at the top of the table, right after GPT 5.2.)

Where it often stands out is analytical depth.

In structured prompts involving:

• long research papers

• policy analysis

• multi-source document synthesis

Claude tends to produce responses that are more structured, more cautious, and more logically organized.

It doesn’t always try to jump to the fastest answer. Instead it tends to walk through reasoning in a more deliberate way.

For tasks like legal analysis or research summaries, that style can actually be a big advantage.

- Industry Leading Long Context

Claude 4.5 is widely known for handling very large context windows, commonly cited around ~200k tokens in standard configurations, with larger limits to 1M reported in some deployments.

In practice, this makes Claude particularly strong at tasks like:

• long research reports

• essays and analytical writing

• technical documentation

• multi-document summaries

Compared to many competitors, Claude’s outputs often feel more coherent and structured over long passages. It tends to maintain tone, argument flow, and formatting better when responses stretch into thousands of words.

Where some models start to drift or repeat themselves in long outputs, Claude usually stays consistent and readable. Instead of chunking documents into many smaller prompts, developers can often feed entire documents directly into the model.

That’s one reason it’s commonly used for knowledge work and writing-heavy tasks, especially when the goal isn’t just generating text, but generating text that still makes sense ten paragraphs later.

- Document Synthesis

Claude 4.5 is particularly strong at document synthesis, which means it performs well when multiple long sources need to be read, compared, and combined into a coherent summary or analysis.

This becomes especially valuable in workflows where the model needs to:

• read several documents at once

• identify overlapping themes or contradictions

• extract key arguments or data points

• produce a structured synthesis rather than a simple summary

For example, Claude can take multiple research papers, policy documents, or reports and generate a consolidated analysis that highlights the main ideas, differences between sources, and overall conclusions.

Part of this capability comes from its large context window, which allows entire documents to be processed together instead of splitting them into smaller chunks. When documents remain in the same context, the model can better track relationships between sections and avoid losing important details.

- Stable and cautious outputs

Anthropic has invested heavily in alignment and safety training, and this tends to show up in Claude’s behavior.

Claude outputs are often:

• more cautious

• less likely to hallucinate confidently

• more transparent about uncertainty

This can sometimes make Claude feel slightly conservative, but in fields like legal, policy, or compliance work, that cautiousness is often exactly what users want.

It tends to behave more like a careful analyst than an overconfident assistant.

Limitations

Despite its strengths, Claude also comes with a few trade-offs.

- Smaller developer ecosystem

Compared to OpenAI, Anthropic’s ecosystem is still smaller.

There are fewer:

• tutorials

• third-party integrations

• community debugging resources

• pre-built agent frameworks

That gap has been shrinking quickly, but OpenAI still benefits from years of head start in developer adoption.

For teams building complex AI infrastructure, the ecosystem around a model can matter almost as much as the model itself.

- Agent tooling less standardized

Claude performs very well in reasoning tasks, but historically it has been less dominant in agent-style automation frameworks.

While Anthropic has introduced tool-use capabilities, many agent frameworks were originally designed around OpenAI APIs and conventions.

That means Claude can sometimes require a bit more custom integration work depending on the stack.

- Premium pricing tier

Claude also sits in the premium tier of AI model pricing. As shown in the table below, it is more expensive than several of the other models included in this comparison. For small workloads this may not matter much. But at large scale, pricing differences start to add up quickly. Running long documents, repeated queries, or production pipelines can significantly increase costs.

- Claude Opus 4.5: $5 input / $25 output (per 1M tokens)

- Claude Sonnet 4.5: $3 input/$15 output (per 1M tokens)

- Claude Haiku 4.5: $1 input/$5 output (per 1M tokens)

| Model | Input ($/1M) | Output ($/1M) |

|---|---|---|

| GPT-5.2 | 1.75 | 14 |

| GPT-5 / 5.1 | 1.25 | 10 |

| Gemini 3.1 Pro | 2.00 | 12 |

| Gemini 2.5 Pro | 1.25 | 10 |

| Kimi K2.5 | 0.60 | 3 |

| DeepSeek V3.2 | 0.28 | 0.42 |

| Grok-4.1 Fast Reasoning | 0.20 | 0.50 |

- Slightly slower conversational flow

Claude’s reasoning style is often more deliberate and verbose.

That can be helpful for analytical tasks, but in fast conversational applications it may feel slightly slower or more detailed than necessary.

For quick chat interactions or lightweight assistants, some teams prefer faster, lighter models.

Best for

Claude 4.5 performs particularly well in:

• legal analysis

• policy evaluation

• research synthesis

• long document review

• analytical writing

• knowledge workflows

If the task involves reading a lot, thinking carefully, and producing structured explanations, Claude is often a strong choice.

Overall

Claude 4.5 positions itself slightly differently from many other frontier models.

Instead of focusing purely on speed or flashy multimodal capabilities, it emphasizes analytical depth, long-context reasoning, and writing quality.

For tasks involving documents, research, and structured thinking, Claude often feels like working with a careful analyst rather than a fast chatbot.

That said, the smaller ecosystem and slightly slower conversational style mean it may not always be the first choice for agent-heavy automation systems or real-time chat products.

In short:

Thoughtful, analytical, and excellent with long documents, but less focused on automation speed.