Category: Future Tech / Emerging Trends — Sunday, May 3, 2026

—

🔥 WHAT HAPPENED

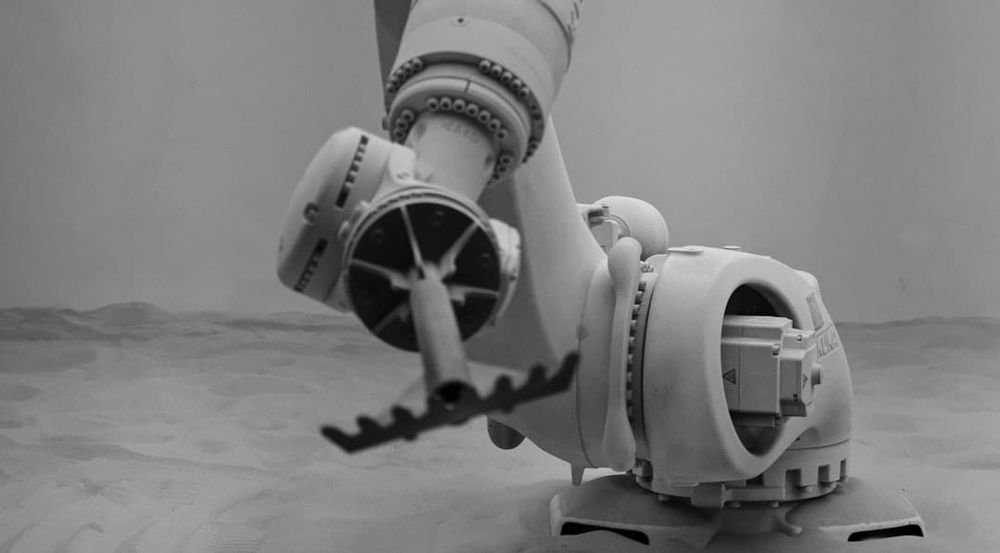

A robotic claw hurtles toward a lightbulb on a table. Any robotics journalist would brace for the crunch. But instead of smashing through it, the claw decelerates, starts pawing around the table like someone searching for glasses on a nightstand, gently positions the bulb between two pincers—and screws it into a nearby socket.

This isn't science fiction. It's Eka, a Cambridge, Massachusetts startup founded by MIT professor Pulkit Agrawal and ex-Google DeepMind researcher Tuomas Haarnoja. And according to Will Knight, a veteran WIRED reporter who has covered robots for over a decade, it's the first time he's ever seen a machine move "naturally."

"No robot arm on the market today can screw in a light bulb," Knight wrote. "Until now."

—

🧠 WHY THIS MATTERS

Robots have been doing factory work for decades. They weld car frames, stack pallets, and assemble circuit boards with inhuman speed. But these are pre-programmed motions for controlled environments. Give them a chicken nugget that's slightly the wrong shape, a lightbulb that's slightly slippery, or keys that jingle—and they fall apart.

Dexterity has been robotics' hardest unsolved problem. The human hand has 27 degrees of freedom, thousands of touch receptors, and a brain that's been evolving to control it for millions of years. Replicating that in silicon and steel has humiliated some of the smartest engineers on the planet.

Eka's breakthrough is that its robots don't just follow instructions. They adapt. They fumble, recover, and learn. The claw I described above? It dropped the bulb multiple times before succeeding—each time chasing it across the table, repositioning, and trying again. That's something no industrial robot does.

—

📊 DEEP DIVE

Here's what makes Eka different from every other robotics company:

1. Simulation-first training. Eka's robots learn entirely in virtual environments—thousands of hours of practice inside simulated worlds where they invent their own solutions. This is closer to AlphaZero (the DeepMind program that taught itself chess and Go) than it is to typical robot training, which relies on humans demonstrating tasks.

2. Proprietary "vision-force-action" models. Agrawal and Haarnoja have developed custom grippers with built-in touch sensors and a new AI algorithm that learns from simulations incorporating realistic physics—mass, inertia, friction. The model learns how movement affects pixels AND how weight and speed interact with objects it grasps.

3. Closing the "sim-to-real gap." OpenAI famously tried this approach with Dactyl, a robotic hand it unveiled in 2018 that went on to solve a Rubik's Cube in 2019. But Dactyl fell apart when conditions changed: if the cube slipped, it couldn't recover. OpenAI disbanded its robotics team in 2021. Agrawal got a one-hour lecture from a former Dactyl team member explaining why his approach "would never work." He ignored it.

4. The chicken nugget test. Eka's most impressive demo involves sorting chicken nuggets strewn across a table into moving take-out containers. The robot works fast and improvises like a person, sometimes placing nuggets carefully, other times almost tossing them in if the container is moving out of reach. Food handling is still heavily reliant on human labor because no two pieces of produce look the same. Eka's robots don't care.
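
Eka has not published how its training works, so what follows is not its system. It is only a toy Python sketch of two ideas from the list above: simulated physics where mass and friction change from one try to the next, and a controller that regrasps after a slip instead of replaying one fixed motion. Every name, range, and number here is a made-up assumption, and nothing is being learned; the point is simply that varied physics punishes a pre-programmed squeeze and rewards feedback.

```python
import random

G = 9.81  # gravitational acceleration, m/s^2


def sample_object(rng):
    """Draw a new object for each episode (all ranges are hypothetical)."""
    return {
        "mass": rng.uniform(0.02, 0.20),    # kg
        "friction": rng.uniform(0.2, 0.9),  # friction coefficient at the pincers
        "max_force": 12.0,                  # N the object tolerates before breaking
    }


def holds(obj, grip_force):
    """Two-finger pinch: friction at both contacts must carry the weight."""
    return 2 * obj["friction"] * grip_force >= obj["mass"] * G


def fixed_policy(obj, grip_force=1.0):
    """Pre-programmed motion: one attempt, always the same squeeze."""
    return holds(obj, grip_force)


def adaptive_policy(obj, start=0.5, step=0.5, max_attempts=20):
    """Feedback: after each slip, regrasp slightly harder, within a safe limit."""
    force = start
    for attempt in range(1, max_attempts + 1):
        if force > obj["max_force"]:
            return False, attempt  # squeezing harder would break the object
        if holds(obj, force):
            return True, attempt
        force += step
    return False, max_attempts


def evaluate(episodes=1000, seed=0):
    """Return (fixed success rate, adaptive success rate) over random objects."""
    rng = random.Random(seed)
    fixed_ok = adaptive_ok = 0
    for _ in range(episodes):
        obj = sample_object(rng)
        fixed_ok += fixed_policy(obj)
        adaptive_ok += adaptive_policy(obj)[0]
    return fixed_ok / episodes, adaptive_ok / episodes


if __name__ == "__main__":
    fixed_rate, adaptive_rate = evaluate()
    print(f"fixed squeeze: {fixed_rate:.0%} success")
    print(f"regrasp on slip: {adaptive_rate:.0%} success")
```

In this toy setup the regrasping policy holds every sampled object, because the heaviest, slipperiest case needs about 4.9 N and the policy is allowed to work its way up to that. The fixed 1 N squeeze drops anything too heavy or too slick. A real system would have to learn that behavior from touch and vision rather than follow a hand-written rule.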

—

⚠️ THE CATCH

Eka's founders won't reveal exactly how their system works, since it's their commercial edge. That makes independent verification difficult. Knight compares Eka's robots to GPT-1, OpenAI's first large language model: "often incoherent but showing glimmers of general linguistic intelligence."

Several big questions remain:

- Scale. Can this approach generalize to thousands of tasks, not just demos? Agrawal believes it's a question of training time and better actuators. But "just scale it up" has been the robotics industry's favorite excuse for decades.

- Speed. The robots are impressively fluid, but they're still slower than human hands. Can they match the 10,000 repetitions per shift that a factory worker does without injury?

- Competing approaches. Some experts argue that combining human demonstration with simulation will yield better results than simulation alone. A wave of well-funded startups is training "vision-language-action" models by having humans wear motion-capture gloves and fold T-shirts for hours.

- The hardware bottleneck. Even the best AI is useless without precision hardware. Eka has built custom grippers, but manufacturing them at scale is a completely different challenge from lab demos.
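
To put the speed question above in numbers, here is a quick back-of-the-envelope calculation in Python. The 10,000 figure comes from the bullet above; the 8-hour shift with no breaks is an assumption added purely for illustration, and the function name is hypothetical.

```python
def seconds_per_repetition(reps_per_shift, shift_hours=8.0):
    """Average time available per repetition over one shift.

    shift_hours defaults to 8, which is an assumption, not a figure from Eka.
    """
    return shift_hours * 3600 / reps_per_shift


if __name__ == "__main__":
    budget = seconds_per_repetition(10_000)
    print(f"{budget:.2f} seconds per repetition")  # prints "2.88 seconds per repetition"
```

Under that assumption, a robot would need to finish each pick, place, or twist in just under three seconds, every time, to keep pace.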

—

🎯 WHAT HAPPENS NEXT

Eka believes it is halfway to solving dexterity. "Some people want robots to be human-level," Agrawal says. "For us, the goal is superhuman."

If they're right, the implications are staggering:

Manufacturing: Building an iPhone requires fiddly dexterity that currently demands human assemblers. Agrawal says the same approach should work for finer manipulation; it just needs different actuators and more simulation time.

Food service: Restaurants are desperate for staff. Robots that can handle produce, fry chicken, and plate dishes could reshape the industry.

Healthcare: Dexterous robots could handle surgical instruments or assist with rehabilitation.

Households: "Trillions of dollars flow through the human hand," Agrawal says. "This is the biggest problem in the world to be solved." Household robots that can fold laundry, wash dishes, and screw in lightbulbs are the holy grail.

—

🧩 BIGGER PICTURE

We've been promised household robots for fifty years. Every decade, a new demo raises hopes, and every decade, the promise fades because the robots still can't tie shoelaces or pick up a slippery apple.

But something shifted this week. When a journalist who's covered robots for more than a decade says he's never seen anything like it, and compares it to his first interaction with ChatGPT, it's worth paying attention.

The ChatGPT moment for the physical world may not have arrived yet. But Eka's claw, fumbling for a lightbulb on a table in Cambridge, Massachusetts, looks an awful lot like the opening scene.

—